Global Standard-Based PV AI Practical Roadmap: CIOMS 7 Principles (Part 1)

On April 15, the Oracle Life Sciences Safety Summit Asia, held in Seoul, brought together safety leaders and experts from global pharmaceutical and biotech companies to discuss in depth the rapid transformation of the pharmacovigilance (PV) landscape and how to respond to it. This article is based on the key presentation delivered by Minkyung Shin, CEO of SELTA SQUARE, titled “Reimagining Pharmacovigilance: Human-AI Interactive Intelligence,” and summarizes global guidelines for the “responsible adoption of AI” together with practical implementation strategies.

PV Paradigm Shift: Why “Responsible AI” Matters Now

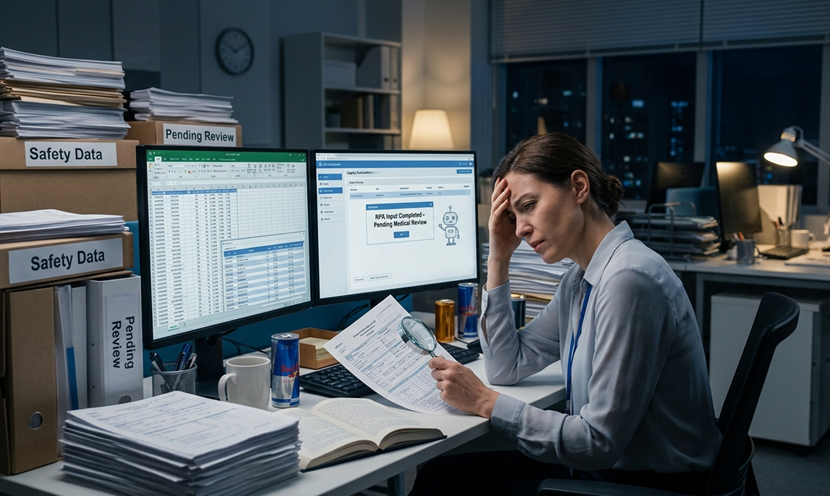

Today, pharmacovigilance (PV) is evolving beyond a “speed game” focused on submitting reports within regulatory timelines into an “intelligence game” of identifying meaningful safety signals in a rapidly growing volume of data. The surge in ICSRs (Individual Case Safety Reports) and real-world data (RWD) has reached a point that manpower alone can no longer manage, so workflows need to be fundamentally redesigned around AI rather than simply staffed up.

[Created by Gemini]

However, adopting automation alone is not sufficient. Many organizations that implement AI still face the “black box” problem, where the rationale behind outputs is unclear, forcing repeated manual reviews. In PV, where medical validity is essential, opaque outputs themselves become a new risk. As a result, whether AI-generated outputs are trustworthy—and how transparently the process is managed—has become a core competitive factor for organizations.

Against this backdrop, a key milestone is the CIOMS Working Group XIV report published in 2025. Developed by global experts from regulatory agencies, industry, and academia, this report establishes international consensus on the use of AI in PV. Beyond theoretical understanding, it provides actionable global standards for the responsible implementation of AI. Below is a practical reinterpretation of the CIOMS principles from SELTA SQUARE’s perspective, designed for immediate application in a rapidly evolving technological environment.

The Seven CIOMS WG XIV Principles for AI

(ref: Artificial Intelligence in Pharmacovigilance - CIOMS)

Principle 1. Risk-based Approach

Managing all AI systems under the same standards leads to inefficient resource allocation. Systems should therefore be governed differently depending on their impact on patient safety. For example, simple typo correction and final causality assessment carry very different levels of risk, so oversight resources should be allocated according to task criticality. In addition, establishing a Business Continuity Plan (BCP) is essential to ensure operational continuity in the event of unexpected system failures.

To enable more objective risk assessment, the CIOMS report proposes a “model risk matrix.” This framework evaluates both the autonomy of the AI system and the impact of its decisions, defining high-risk categories based on these two dimensions. Higher-risk areas require greater human oversight, enabling efficient supervision and optimal resource allocation. This approach not only clarifies the organization’s AI governance status but also serves as evidence of system safety and control for regulatory authorities.
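As a rough illustration of the two-dimensional idea described above, the following Python sketch combines an autonomy level and an impact level into a risk tier. The level names, the multiplicative score, and the thresholds are all hypothetical choices made for this example; they are not taken from the CIOMS report, and a real matrix would be defined and justified by each organization.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    """Hypothetical autonomy levels (not from the CIOMS report)."""
    ASSISTIVE = 1    # AI only suggests; a human performs the task
    SUPERVISED = 2   # AI performs the task; a human reviews it
    AUTONOMOUS = 3   # AI performs the task without routine review


class Impact(IntEnum):
    """Hypothetical levels for the impact of a wrong decision."""
    LOW = 1      # e.g. typo correction
    MEDIUM = 2
    HIGH = 3     # e.g. final causality assessment


def risk_tier(autonomy: Autonomy, impact: Impact) -> str:
    """Map the two dimensions to a tier using an assumed scoring rule."""
    score = int(autonomy) * int(impact)  # ranges from 1 to 9
    if score >= 6:
        return "high"
    if score >= 3:
        return "medium"
    return "low"


if __name__ == "__main__":
    for autonomy in Autonomy:
        row = [risk_tier(autonomy, impact) for impact in Impact]
        print(f"{autonomy.name:<11}", row)
```

Under these assumed thresholds, an assistive typo-correction tool lands in the low tier, while any system acting autonomously on high-impact decisions lands in the high tier and would call for the most human oversight.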

Principle 2. Human Oversight

This principle is grounded in “human-centric AI design,” where AI is intended not to replace experts but to enhance their decision-making capabilities. To ensure reliability and accountability, systems must include clearly defined points of human intervention and critical validation from the design stage onward. Importantly, final decision-making authority—and accountability for outcomes—must always remain with humans. PV professionals are therefore expected to participate in system design, oversee operations, and serve as a bridge between technological systems and domain expertise.

Principle 3. Validity & Robustness

This principle ensures that AI systems operate accurately and consistently in real-world environments as intended. It goes beyond development-stage performance, focusing on system resilience across diverse and unexpected scenarios.

Supervisory approaches are generally divided into two types: HITL (Human-in-the-loop), where humans intervene during the process, and HOTL (Human-on-the-loop), where humans review outputs after AI execution. The appropriate approach should be selected based on the risk and complexity of the task. Continuous validation through regular testing and monitoring is essential to maintain system reliability.
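The sketch below shows one way the two ideas in this paragraph might be expressed in Python: choosing a supervision mode from a task's risk tier, and flagging a system for revalidation when its measured accuracy on a periodic test set drops. The `Task` class, the rule that only high-risk tasks get HITL, and the 0.95 accuracy floor are illustrative assumptions, not requirements stated in the CIOMS report.

```python
from dataclasses import dataclass


@dataclass
class Task:
    """Hypothetical description of an AI-supported PV task."""
    name: str
    risk_tier: str  # assumed to be "low", "medium", or "high"


def oversight_mode(task: Task) -> str:
    """Pick HITL for high-risk tasks and HOTL otherwise (assumed rule)."""
    return "HITL" if task.risk_tier == "high" else "HOTL"


def needs_revalidation(correct: int, total: int,
                       min_accuracy: float = 0.95) -> bool:
    """Return True when accuracy on a periodic test set is below the floor.

    An empty test set is treated as a failure, since no evidence of
    performance is available.
    """
    if total == 0:
        return True
    return correct / total < min_accuracy


if __name__ == "__main__":
    tasks = [
        Task("typo correction", "low"),
        Task("final causality assessment", "high"),
    ]
    for task in tasks:
        print(task.name, "->", oversight_mode(task))
    print("revalidate:", needs_revalidation(correct=90, total=100))
```

In practice the mapping from risk tier to supervision mode, the metric, and the threshold would each be set per task and documented as part of the validation plan.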

The principles of “risk-based approach,” “human oversight,” and “validity & robustness” form the foundational framework for implementing AI in pharmacovigilance. In the next part, we will explore the remaining CIOMS principles in depth, focusing on transparency and social responsibility, and complete the practical roadmap toward a truly sustainable pharmacovigilance system.